Is the Rowena Road Diet a Safety Improvement? (yes)

Sat 07 September 2019

In 2012 a pedestrian was struck by a car and killed on Rowena Avenue in Silver Lake, the neighborhood in Los Angeles where I live. In 2013 the city put in place a number of safety improvements along that stretch of road, including reducing the number of car traffic lanes from four to two, adding bike lanes, and including a center-left-turn lane. The combination of these changes are termed a "road diet", intended to slow the flow of traffic and produce less hazardous conditions for users of the road.

Anybody who walks around Los Angeles as a pedestrian knows how hostile of an environment it can be, including in Silver Lake, where cars regularly exceed 40 miles per hour on mixed-use boulevards like Fountain, Hyperion, Glendale, and Rowena. Nevertheless, the reconfiguration of Rowena prompted significant backlash among local residents, who worried about reduced parking, increased congestion, and more cut-through traffic on neighborhood streets. A similar road diet along Venice Boulevard in Mar Vista led to a threat of a recall campaign of the local city council member.

Clearly, changes to the streetscape such as the Rowena road diet can inflame the community, and can effect risks for politicians. So are road diets worth it? Do they actually provide the safety improvements that they are claimed to do?

The LA Department of Transportation (LADOT) recently published a study on the results of the road diet. In the study, they claimed that the rate of auto crashes on the relevant stretch of Rowena dropped by 37%. This number was obtained by looking at the difference in the average rate of crashes per year before and after the road reconfiguration. However, the data are noisy, and there is considerable variability in the number of crashes from year to year. A road diet skeptic might take a look at the crash statistics and claim there is not enough data, that the study has not averaged over a sufficiently long time, and will soon regress to the mean.

Here we perform a more in-depth analysis of the limited data included in the LADOT report. In particular, we will construct a statistical model for crashes along Rowena, fit it to the observed data, and consider whether it shows a decrease in crashes.

We will use the Python package PyMC3 to do the modeling, and Altair to make charts. We begin by importing the Python packages needed for the analysis, namely Pandas, Altair, and PyMC3. We also customzize the Altair rendering process to make the charts render more easily on this-here blog:

import altair as alt

import pandas as pd

import pymc3

alt.data_transformers.disable_max_rows()

alt.renderers.enable('svg')

Loading the data¶

We construct a Pandas dataframe of the crash data by reading the data off of Attachment B in the LADOT report, and plot it on a bar chart:

df = pd.DataFrame({

'crashes': [10, 10, 15, 10, 13, 15, 11, 13, 11, 14, 7, 12, 7, 8, 5],

'year': list(range(2003, 2018))

})

alt.Chart(df).mark_bar().encode(x='year:O', y='crashes')

As noted above, it certainly looks like there is a decrease in the number of crashes towards the end of the chart, but it's difficult to say with certainty. So let's model it.

Bayesian Markov-Chain Monte-Carlo methods.¶

PyMC3 works by constructing a statistical model for generating your data. It then fits the model by means of Bayesian Markov-Chain Monte-Carlo (MCMC) analysis. A full explication of MCMC methods is well beyond the scope of this article, but here is a brief qualitative description of how they work: A Markov-Chain method describes a process that moves through parameter space, where the transition to each state depends only on the previous state. A Monte-Carlo method is a general term for a computational method where there are many random draws of values in performing the computation (for a fun example of this, see Buffon's needle).

Bayesian MCMC methods typically involve constructing a statistical model with some unknown parameter values. An MCMC algorithm then explores the space of these possible parameter values, testing how well each point in parameter space fits the observed data. They do this by hopping generally towards points of higher probability (roughly the Markov-chain part), with an element of randomness (roughly the Monte-Carlo part) so they don't get caught in local maxima.

As a result of this approach, Bayesian MCMC methods tend to explore not only the best model fit to the data, but also a broad region around the best fit. Therefore, they furnish not only an estimate of the best parameter values, but also an estimate of the uncertainties and tradeoffs. This estimate of the uncertainty is particularly useful to us in looking at the Rowena road diet. We are not just interested in how much the rate of crashes has decreased, but how much we can trust that number.

A model for crashes¶

We will model the rate of crashes along Rowena as a Poisson process. Poisson processes describe counting statistics which are memory-less. Events described by a such a process have fixed probability of occurring at any given time, and these events are statistically independent of each other. Poisson processes are routinely used to model earthquakes, pedestrian counts, website traffic, and, indeed, car crashes.

Now, the features of constant-rates and memorylessness are probably not completely accurate. Over the last few years, rates of car crashes, especially those involving pedestrians, have been on a persistent increase in the United States. And over time periods of seconds, car crashes are certainly not statistically unrelated (crashes beget more crashes). However, we will assume that bunched crashes on a second-time-scale are considered a single event, and that local effects on Rowena are more important than longer-term city-wide secular changes, and therefore move forward with a Poisson process.

We will consider a model where there is an early rate of collisions, a later rate of collisions, and a time point at which the rate changes from early to late. This makes for a three parameter model (early rate, late rate, and change point).

Bayesian methods require us to define prior distributions for each of the parameters for which we are fitting. These represent our prior beliefs about the values of the parameters. Sometimes the need to choose a prior is a cause of some angst for the practitioner, but I would argue that they provide much of the power and expressiveness of Bayesian methods. They allow us to build in our understanding of the physics of the model, and are a natural way to regularize the model, providing a powerful tool against overfitting.

We will make the following choices for the priors of our three parameters:

- For the change point we will assign a uniform prior from 2003 to 2017, indicating that we consider any point from 2003 to 2017 to be equally likely to contain a change.

- For the rate parameters we will choose exponential distributions. Exponential distributions are useful for representing strictly positive real number values (as the rate must be), where very high numbers are less likely than very low number. Exponential distributions are specified via a scale parameter $\lambda$ (a so-called "hyperparameter" for our model), which characterizes how quickly the probability density decays. The mean of an exponential distribution is equal to $1/\lambda$, so we will use the overall mean of the number of crashes per year on Rowena to choose the $\lambda$ for our priors on both the early and late rates.

Constructing the model with PyMC3¶

PyMC3 provides a nice API for constructing statistical models out of our chosen distributions, including ways of specifying random variables with our chosen prior distributions. We begin by declaring our rate variables, with $\lambda$ equal to one over the overall mean of the crash rate:

with pymc3.Model() as road_diet_model:

early_rate = pymc3.Exponential('early_rate', 1./df.crashes.mean())

late_rate = pymc3.Exponential('late_rate', 1./df.crashes.mean())

Next, we declare the switch point variable with a discrete uniform prior between the years of our dataset:

with road_diet_model:

switchpoint = pymc3.DiscreteUniform(

'switchpoint',

lower=df.year.min(),

upper=df.year.max()

)

We need to stitch together these random variables with a meta-random variable for the overall rate,

which switches from early_rate to late_rate after switchpoint.

This can be accomplished using the pymc utility function switch:

with road_diet_model:

rate = pymc3.math.switch(switchpoint >= df.year, early_rate, late_rate)

Finally, we need to inform the model of the observed data, which we presume have been generated using a Poisson process:

with road_diet_model:

pymc3.Poisson('crashes', rate, observed=df.crashes)

The observed argument lets the model know that these are the observations which we are trying to fit,

and it will use those observations when testing how good any particular realization is at describing the data.

This completes the specification of the statistical model of crashes on Rowena. All that remains is to conduct the MCMC sampling:

# make pymc a bit less verbose

import logging; logger = logging.getLogger('pymc3'); logger.setLevel(logging.FATAL)

with road_diet_model:

trace = pymc3.sample(50000, progressbar=False)

The resulting samples from the MCMC algorithm are stored in the trace object.

We can put those samples in a dataframe for further analysis:

trace_df = pd.DataFrame({

'switchpoint': trace['switchpoint'],

'early_rate': trace['early_rate'],

'late_rate': trace['late_rate']

})

Interpreting the results¶

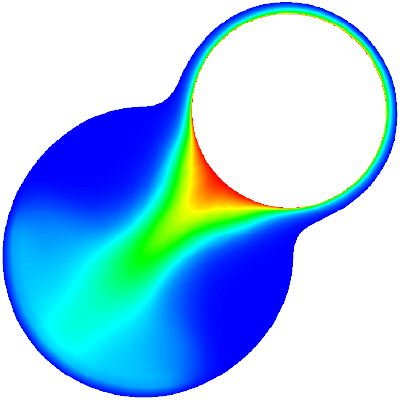

If the model has converged, then resulting samples from the MCMC trace are representative of the model's posterior distribution. Descriptive statistics and plots can therefore give us insight into our beliefs about the random variables.

The first question we want to ask is: did the rate of collisions actually change, and if so, by how much? The following histogram of our rate samples shows whether we should consider the rate to have changed:

alt.Chart(

trace_df[(trace_df.late_rate < 20) & (trace_df.early_rate < 20)]

).transform_fold(

['late_rate', 'early_rate']

).mark_area(

opacity=0.6, interpolate='step', clip=True

).encode(

alt.X('value:Q', title='Crashes/year', bin=alt.Bin(step=0.25)),

alt.Y('count()', title='Number of Samples', stack=None),

color=alt.Color('key:N', scale=alt.Scale(scheme='set1'))

).configure_axis(grid=False)

As described in the LADOT report, the later rate of crashes appears to be significantly less than the early rate. The early rate peaks at around twelve crashes per year, and the later rate peaks at around seven crashes per year. However, there is a fair amount uncertainty in the value of each of them, and there is some overlap. So while it seems likely that there was a drop, it is not a certainty.

From these samples we can also calculate the mean and standard deviations of the posteriors:

from IPython.display import Latex

display(Latex(

f"Early Rate: ${trace_df['early_rate'].mean():.1f} "

f"\pm {trace_df['early_rate'].std():.1f}$ crashes/yr"

))

display(Latex(

f"Later Rate: ${trace_df['late_rate'].mean():.1f} "

f"\pm {trace_df['late_rate'].std():.1f}$ crashes/yr"

))

We are also interested in the posterior distribution for the change point:

alt.Chart(trace_df).mark_bar(

opacity=0.6, interpolate='step', clip=True

).encode(

alt.X('switchpoint:O', title='Change point'),

alt.Y('count()', title='Number of Samples'),

color=alt.Color('key:N', scale=alt.Scale(scheme='set1'), legend=None)

).configure_axis(grid=False)

There is considerable uncertainty in when the posterior distribution for the change point, though the great majority of the probability density is in the latter portion of the time interval. The most likely year for the rates to have switched is 2014, the year after the road diet was enacted.

Finally, we would like to see if we can reproduce the top-line number from the LADOT report: by how much has the rate of crashes changed? And what is the uncertainty in that number?

trace_df['rate_diff'] = trace_df.early_rate-trace_df.late_rate

trace_df['change'] = (-trace_df.rate_diff/trace_df.early_rate)*100

alt.Chart(trace_df[trace_df.change < 100]).mark_area(

opacity=0.6, interpolate='step', clip=True

).encode(

alt.X('change:Q', title="Percent Change", bin=alt.Bin(step=5)),

alt.Y('count()', title="Samples"),

color=alt.Color('key:N', scale=alt.Scale(scheme='set1'), legend=None)

).configure_axis(grid=False)

The change in the rate of crashes is given by:

Latex(

f"Percent change in crashes/yr: ${trace_df['change'].mean():.1f} "

f"\pm {trace_df['change'].std():.1f}\%$ "

)

This is a pretty sizable decrease, and fairly close to the LADOT figure! The uncertainty, on the value is large, however, so I would be hesitant to report it to too many digits.

Finally, we can compute a 95% credible interval for the percent change, indicating the bounds beween which 95% of the samples fell:

hpd = pymc3.stats.hpd(trace_df.change)

Latex(f"95% credible interval for percent change: $ {hpd[0]}\%, \, {hpd[1]}\% $")

This indicates that the great majority of the posterior shows an overall decrease in the rate of crashes.

Conclusions¶

The results of this analysis are roughly consistent with that of the LADOT report. There was a significant decrease in the rate of collisions on the order of 30%, and that decrease roughly coincides with the installation of the road diet. And all this occurred during a period of increasing pedestrian fatalities, both locally and nationally.

There remains significant uncertainties on exactly by how much it has decreased. However, these are not a bad thing! The uncertainties are largely due to length of the elapsed time since the road diet was installed: there has not really been enough time to say with absolute confidence that the road has a lower rate of crashes. As time goes on, and more crash data is collected, we will be able to have more confidence in our conclusions. But when lives are at stake, it's worthwhile to conduct such analyses as soon as feasible, and often thereafter.

The council member for the neighborhood has since announced his support for the road diet, and it seems that it is likely to stay.